IntelliMagic Vision for SAN

Your Single Solution for Storage Management

Monitor the performance, capacity & configuration of your multi-vendor SAN infrastructure in a single view.

Reduce your costs and mean time to resolution, and safely get the most value out of your SAN infrastructure with built-in, architecture-specific intelligence and statistical anomaly detection.

Multi-Vendor Performance, Capacity and Configuration Monitoring

Storage Performance Management

Proactively Monitor and Optimize Your Multi-Vendor SAN Performance

HOW

IntelliMagic Vision’s built-in artificial intelligence proactively detects issues and potential bottlenecks developing inside your storage arrays that, if left undetected, could degrade application performance and hurt your business. It also vastly reduces mean time to resolution when issues do occur.

Capacity Planning and Forecasting

Safely Predict Storage Growth and Avoid Capacity Constraints

HOW

Change detection charts identify utilization patterns that deviate from a 30-day baseline, allowing you to easily identify anomalous growth.
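
To illustrate the general idea of comparing each day against a trailing 30-day baseline, here is a minimal sketch using a simple z-score test. This is not IntelliMagic's actual algorithm; the function name `flag_anomalies` and the default threshold of 3 standard deviations are hypothetical choices made for the example.

```python
import math
from statistics import mean, stdev

def flag_anomalies(daily_values, baseline_days=30, z_threshold=3.0):
    """Hypothetical sketch: return (day_index, value, z_score) for each day
    whose value deviates from its trailing baseline window by more than
    z_threshold standard deviations."""
    anomalies = []
    for i in range(baseline_days, len(daily_values)):
        window = daily_values[i - baseline_days:i]  # trailing baseline window
        mu, sigma = mean(window), stdev(window)
        value = daily_values[i]
        if sigma == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            z = 0.0 if value == mu else math.copysign(math.inf, value - mu)
        else:
            z = (value - mu) / sigma
        if abs(z) > z_threshold:
            anomalies.append((i, value, z))
    return anomalies

# Usage: 30 days of steady utilization, then a normal day and a sudden jump.
usage = [100 + (i % 3) for i in range(30)] + [101, 140]
print(flag_anomalies(usage))  # only day 31 (value 140) is flagged
```

A fixed z-score threshold is the simplest possible choice; a production tool would likely also account for weekly seasonality and trends in the baseline.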

End-to-End Configuration Topology

Seamlessly Navigate from VMware Performance to Back-end Performance

HOW

IntelliMagic’s breakthrough integration of VMware performance and configuration data changes the game for SAN administrators. Visualize your entire infrastructure from the VMware guest through the ESX host to the connected fabric ports, and see the related switch ports and LUNs in a single view.

Key Features

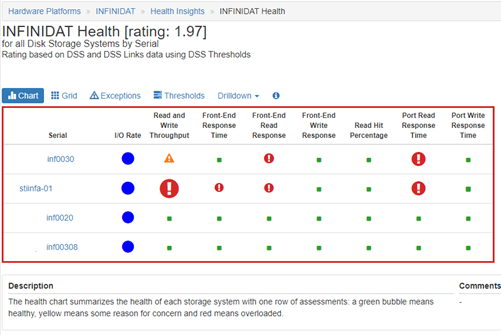

Proactive Health Insights

Automated Health Insights leverage hardware-specific AIOps functions to identify and prevent the most common storage and fabric performance and capacity issues.

Health Insights combine the time dimension, multiple metrics, multiple components, and AI-rated metrics in a single view to provide prevention-focused insights. In the example shown, the system has throughput constraints as well as front-end response time, front-end read response time, and port read response time issues, indicating serious performance risk.

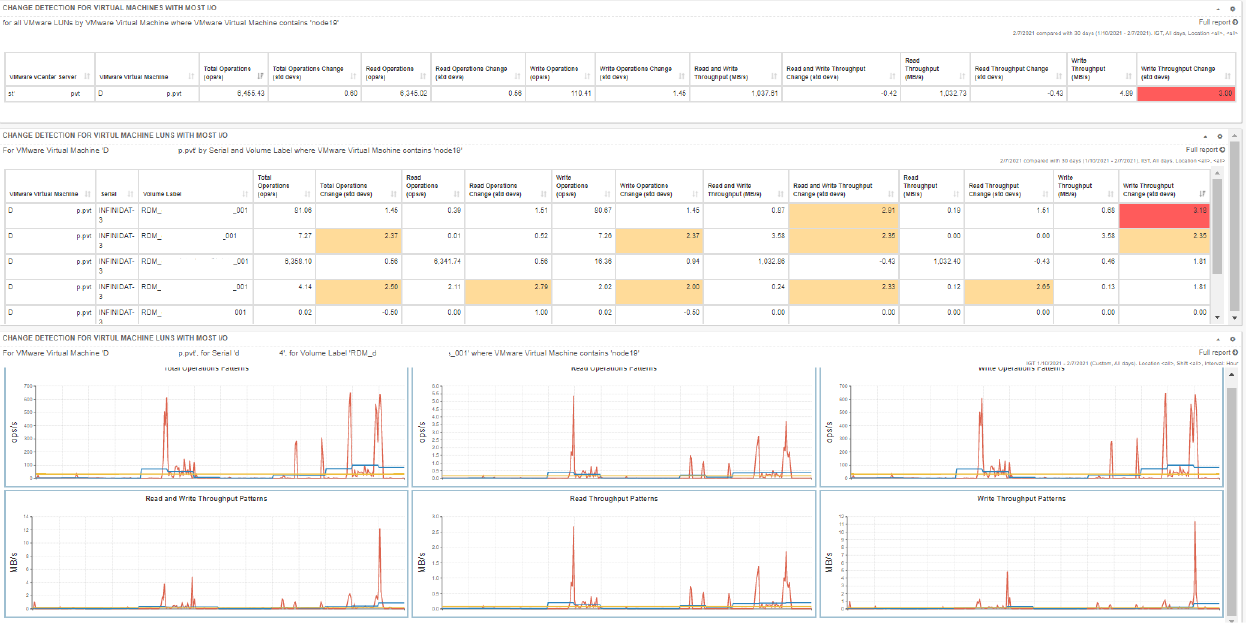

Advanced Statistical Analysis and Anomaly Detection

Advanced statistical functions provide performance and capacity anomaly detection. This allows you to not only see what has changed, but also determine the magnitude and significance of that change.

IntelliMagic Vision's Change Detection functions automatically identify significant workload changes across your storage, host (cluster), virtual machine, and switch port environment, so that you can investigate anomalies before they affect shared resources.
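
The distinction between the magnitude of a change and its significance can be sketched by comparing a "before" window with an "after" window. The example below is a minimal illustration, assuming Welch's t statistic as the significance measure; it is not the method IntelliMagic Vision uses, and the function name `change_significance` is hypothetical.

```python
import math
from statistics import mean, variance

def change_significance(before, after):
    """Hypothetical sketch: compare two measurement windows (e.g. daily IOPS)
    and return (percent_change, welch_t).

    percent_change is the magnitude of the shift in the mean; welch_t measures
    how large that shift is relative to the variability in both windows.
    Assumes a non-zero baseline mean and at least two samples per window."""
    m1, m2 = mean(before), mean(after)
    v1, v2 = variance(before), variance(after)  # sample variances
    se = math.sqrt(v1 / len(before) + v2 / len(after))
    pct = (m2 - m1) / m1 * 100.0
    if se == 0:
        # No variability at all: any difference in means is infinitely "significant".
        t = 0.0 if m2 == m1 else math.copysign(math.inf, m2 - m1)
    else:
        t = (m2 - m1) / se
    return pct, t

# Usage: a workload whose average rose by about 20% with little day-to-day noise.
pct, t = change_significance([100, 102, 98, 101, 99], [120, 122, 118, 121, 119])
print(f"{pct:.1f}% change, t = {t:.1f}")
```

A large percent change with a small |t| suggests a noisy workload rather than a real shift; as a rough rule for reasonably large samples, |t| well above 2 indicates the change is unlikely to be random variation.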

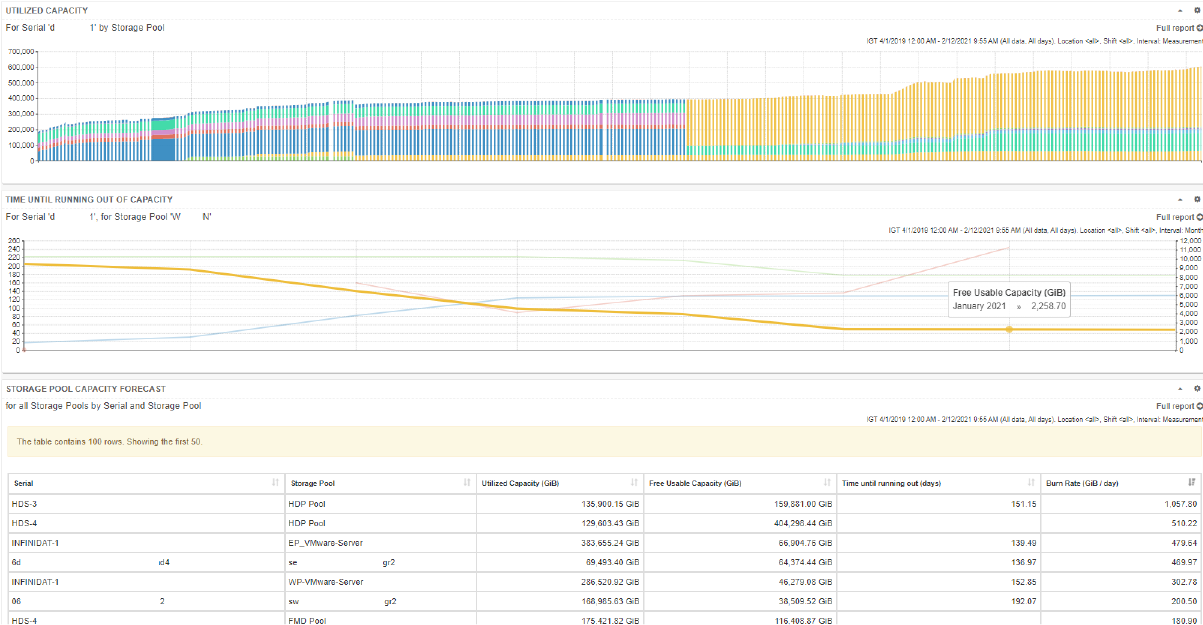

Accurate Capacity Forecasting

Capacity forecasting leverages AIOps functions to understand future storage capacity requirements and prevent capacity constraints.

IntelliMagic tracks key capacity metrics over time and calculates the consumption rate to provide a comprehensive view of capacity growth and projected free-space exhaustion at both the storage system and storage pool level.

The projected number of days until a system runs out of space can be used to plan storage purchases for the affected storage system in time.
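
The "days until full" figure follows from dividing the remaining free space by the consumption rate. A minimal sketch, assuming daily used-capacity samples and a straight-line (least-squares) growth model, might look like the following; the function name and the linear model are illustrative assumptions, not IntelliMagic's forecasting method.

```python
def days_until_full(used_tb, capacity_tb):
    """Hypothetical sketch: estimate days until a pool reaches capacity_tb.

    used_tb holds daily used-capacity samples, oldest first. A least-squares
    line gives the average consumption rate (TB/day); remaining free space is
    measured from the most recent sample. Returns None when usage is flat or
    shrinking, since no exhaustion date can be projected."""
    n = len(used_tb)
    if n < 2:
        raise ValueError("need at least two samples")
    xs = range(n)
    x_mean = (n - 1) / 2
    y_mean = sum(used_tb) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, used_tb))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    rate = sxy / sxx  # consumption rate in TB per day
    if rate <= 0:
        return None
    free = max(capacity_tb - used_tb[-1], 0.0)
    return free / rate

# Usage: a 64 TB pool growing by about 1 TB per day, currently at 54 TB.
print(days_until_full([50, 51, 52, 53, 54], 64))  # 10.0
```

A linear fit is the simplest reasonable choice; pools with accelerating or seasonal growth would call for a different model.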

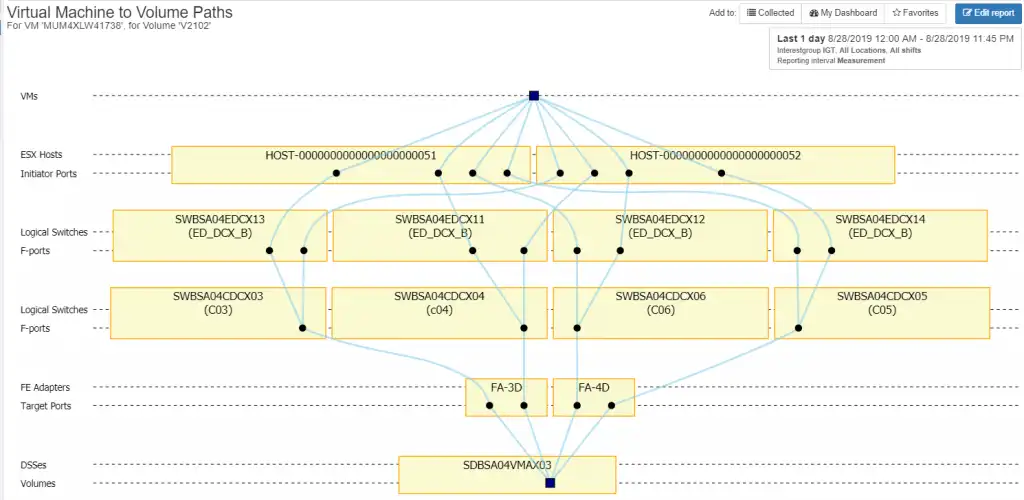

Configuration Analytics

IntelliMagic dynamically creates topology views that can be used to troubleshoot end-to-end connectivity. IntelliMagic also combines this technology with best practices to automatically identify orphaned ports, orphaned volumes, asymmetrical connectivity, single paths for ESX hosts and masking views, orphaned zones, and other configuration issues.

Configuration analytics visually maps every host connected through the Fibre Channel fabric, from the VMware guest to the back-end storage LUN and its associated disk drives, and offers interactive drill-downs and descriptions that make the environment easy to learn and investigate.
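
Two of the checks named above, single-path connectivity and orphaned volumes, can be sketched over a simple path inventory. The example assumes a hypothetical data model of (host, initiator port, target port, volume) tuples; it does not reflect how IntelliMagic Vision actually stores or evaluates topology data.

```python
from collections import defaultdict

def find_config_issues(paths, volumes):
    """Hypothetical sketch of two best-practice checks.

    paths: iterable of (host, initiator_port, target_port, volume) tuples,
           one per host-to-volume path (an assumed data model).
    volumes: all volumes provisioned on the array.
    Returns (single_path, orphaned):
      single_path: sorted (host, volume) pairs reachable over only one
                   distinct initiator/target port pair (no redundancy);
      orphaned:    sorted volumes that no host can reach."""
    routes = defaultdict(set)
    for host, initiator, target, volume in paths:
        routes[(host, volume)].add((initiator, target))
    single_path = sorted(key for key, r in routes.items() if len(r) == 1)
    mapped = {volume for _, volume in routes}
    orphaned = sorted(set(volumes) - mapped)
    return single_path, orphaned

# Usage: esx01 has redundant paths to vol1, esx02 has a single path to vol2,
# and vol3 is not presented to any host.
paths = [
    ("esx01", "hba0", "ctrlA-p1", "vol1"),
    ("esx01", "hba1", "ctrlB-p1", "vol1"),
    ("esx02", "hba0", "ctrlA-p2", "vol2"),
]
print(find_config_issues(paths, ["vol1", "vol2", "vol3"]))
# ([('esx02', 'vol2')], ['vol3'])
```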

AIOps via SaaS Delivery

Advantages of adopting a cloud model include rapid deployment, minimal on-premises setup, freeing staff from on-premises installation, operations, and product maintenance, and easy access to IntelliMagic consulting services to supplement local skills.

Trial Our Advanced Statistical Analysis

See for yourself why leading companies are saying “I don’t know how to live without IntelliMagic Vision.” Whether you’re evaluating competitive solutions or trying to solve a problem, we’re happy to help you move forward with your IT initiatives.

Comprehensive Monitoring Across Your Storage Hardware, VMware, and SAN Fabric

Supported Hardware

IntelliMagic Vision collects measurement data from the storage arrays and SAN switches and supports the following platforms, attached to any host type, such as Linux, UNIX, AIX, Windows, and VMware.

Supported Data Sources

IntelliMagic Vision uses various APIs and native CLI commands to collect data from the storage arrays and switches.

Secure and Flexible Deployment and Monitoring

Continue Learning with These Resources


Webinar

- Analyst Webinar: The Business Value of Smarter Enterprise Storage Analytics (39:11)

- The Benefit of AIOps for storage infrastructure performance management (51:21)

- Storage System 101: Architecture, History, Trends, and Predictions (55:10)

- Components of an Effective Storage Infrastructure Health Assessment (46:30)

Request a Free Trial or Schedule a Demo Today

Discuss your technical or sales-related questions with our availability experts today