The deep experts on z/OS infrastructure performance are retiring in growing numbers. Their expertise cannot be replaced with new hires. When that golden day of retirement arrives for you, what will happen to that SAS and MXG or MICS reporting toolset that you spent decades developing?

Without a major infusion of talent and time, these types of reporting solutions architected many years ago will become obsolete. But their purpose is still as valid as ever. They are a primary means for the performance and capacity team to maintain the service levels required for the applications.

The impact of the loss of deep mainframe expertise is already a significant factor for many sites. And because of this reliance upon custom-made tool sets, the impact of skilled mainframe performance professionals retiring in waves will be especially devastating.

Maintaining and Interpreting MXG Reports is a Disappearing Art

Barry Merrill’s first book with SAS programs was published 39 years ago and was revolutionary for RMF and SMF performance reporting and analysis.

According to the MXG website, MXG now supports nearly “every raw record on the face of the earth.” Because of its scope and coverage, MXG is used extensively throughout the industry for day-to-day problem analysis, performance monitoring, infrastructure capacity planning, and so on.

However, deriving value from SMF data using this methodology has always been heavily dependent on manual coding and human interpretation. You have to be a kind of “RMF and SMF data whisperer” to understand and maintain the vast library of customized code and reports to ensure it is kept up to date with new changes.

Knowing how to read all these reports and determine whether what they show is good or bad for your workloads is even more challenging. Environments are growing larger and more complex while staff levels keep shrinking. With co-workers retiring at an alarming rate, you are busier than ever. You need a faster way to get your answers. And you have probably already realized that it takes years to get even very smart new staff up to speed using one of these decades-old performance reporting infrastructures.

As a performance and capacity expert, you may very well have spent your entire working career creating, maintaining and interpreting your home-grown reports. It is likely now becoming clear that when you leave, the new staff will have neither the skills nor the time required to extend the life of this tool set.

A Modern Alternative to SAS & MXG or MICS

MXG paved the way for modern z/OS performance management. When the systems were a more manageable size and the teams large enough to handle the workload, coding new SAS reports not only created a sense of fulfillment but also made effective performance management possible.

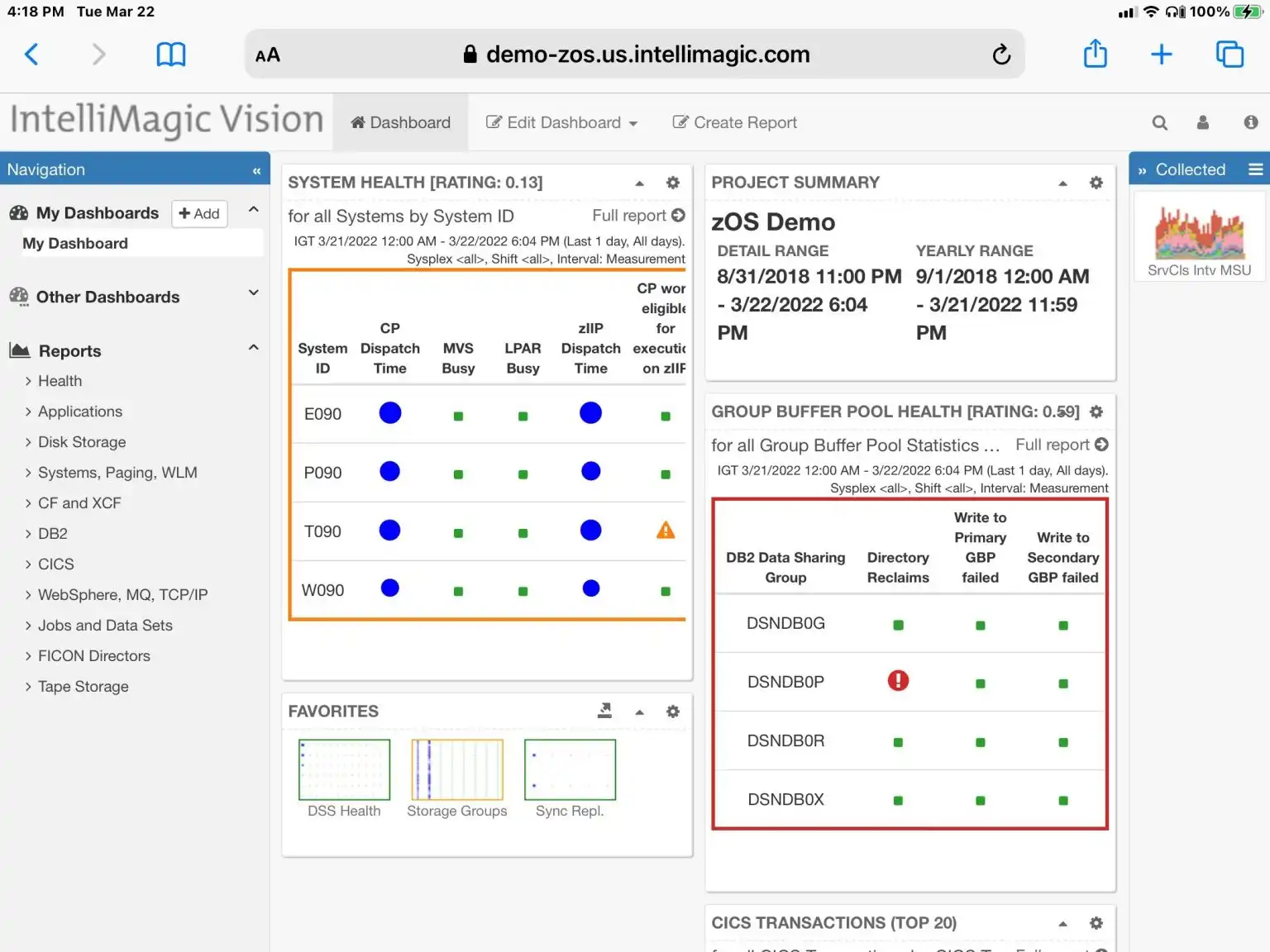

Interactive, web-accessible, z/OS performance management interface

However, in the age of ever-growing environments and complexities combined with reduced staff levels, it has become necessary to put the power of the machine to work even better, with smart analytics that require no coding and can automatically interpret the most important metrics. This greatly assists the new generation of human analysts as well as the experts that remain.

The problem is more than just people not having the skills to create and interpret the charts. There are also simply too many metrics for humans to check comprehensively. Interpreting the measurements is so time-consuming and requires so much expertise that it is typically only done after production problems have already occurred, instead of the metrics being assessed proactively to find hidden issues.

The Power of Human Expertise versus the Power of the Machine

Human experts are so powerful because of their fundamental understanding of the environment, the ability to mentally combine information from multiple sources to draw conclusions, their insight into what is relevant and what isn’t, and their strong desire to find the ‘why’.

Our weakness as humans, however, apart from the fact that we will someday retire and take our expertise with us, is that we only have one brain and one pair of eyes. We are simply limited in our capacity to sift through vast amounts of metrics or charts, and we are not very good at repetitive work. This means that the larger and more complex the environment becomes, with more areas and metrics to review than ever, the less effective human experts become.

This is where the power of the machine helps. A software program can patiently process millions of SMF and RMF metrics in a matter of seconds. However, if we want the machine to intelligently assess the data to identify outliers and signs of potential issues and risks looming inside the z/OS infrastructure like a human expert would, the z/OS expertise and knowledge need to be somehow packaged into the software.

This combination of digitized expertise and machine power removes the need to find the ‘needle in the haystack’ manually and enables proactive mainframe performance management that is otherwise completely unfeasible.
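As a rough illustration of what "packaging expertise into the software" could look like, the sketch below rates each metric sample against expert-defined limits and reports only the samples that need attention. It is a minimal sketch under stated assumptions, not a description of any product: the metric names, the `Rule` structure, and the threshold values are all hypothetical and are not real RMF or SMF field names or recommended limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Hypothetical packaged expertise: limits above which a metric needs attention."""
    metric: str
    warning: float
    exception: float


def rate(value: float, rule: Rule) -> str:
    """Rate one sample as 'ok', 'warning', or 'exception'."""
    if value >= rule.exception:
        return "exception"
    if value >= rule.warning:
        return "warning"
    return "ok"


def assess(samples, rules):
    """Return only the samples that need attention, exceptions first."""
    by_metric = {r.metric: r for r in rules}
    findings = []
    for system, metric, value in samples:
        rule = by_metric.get(metric)
        if rule is None:
            continue  # no expertise packaged for this metric, so skip it
        rating = rate(value, rule)
        if rating != "ok":
            findings.append((rating, system, metric, value))
    order = {"exception": 0, "warning": 1}
    findings.sort(key=lambda f: order[f[0]])  # stable sort keeps input order within a rating
    return findings


# Illustrative values only; real limits depend on the workload and hardware.
RULES = [
    Rule("cpu_busy_pct", warning=85.0, exception=95.0),
    Rule("dasd_response_ms", warning=2.0, exception=5.0),
]
SAMPLES = [
    ("SYSA", "cpu_busy_pct", 72.0),
    ("SYSB", "cpu_busy_pct", 97.5),
    ("SYSA", "dasd_response_ms", 3.1),
    ("SYSB", "paging_rate", 12.0),
]
FINDINGS = assess(SAMPLES, RULES)

for finding in FINDINGS:
    print(finding)
```

The point of the design is that the analyst sees two findings instead of four samples. At the scale of millions of metrics, the same filtering is what makes routine proactive review feasible.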

Interactive z/OS Performance Management

Another aspect that is not always present in home-grown tools is the flexibility to dynamically navigate through the metrics. In addition to the benefits of having pre-defined views, there is a lot of efficiency to be gained if those views can be easily customized on the fly, e.g. to add variables to analyze potential correlations, or to focus the analysis on the subset of data that needs to be examined in greater detail.

Many experienced SAS sites have implemented very sophisticated reports using MXG. However, often the process of adding even a single detailed static report into your batch report run can involve hours of work from an expert.

It saves time to use a solution with built-in z/OS expertise where all dynamic reports are already available, with the added flexibility to dynamically customize them or zoom into related data interactively with live queries driven only by a few clicks in the user interface. This not only helps you as the time-constrained expert, but also enables your new co-workers to come up to speed much faster.

Preparing for the Future

Sites will continue to take advantage of the support provided by MXG across the universe of SMF record types when special purpose ad hoc reporting is required. However, as the primary means to manage something as critical as z/OS infrastructure performance, it isn’t scalable given today’s team dynamics. Nor is the next generation likely to have the skills or time to be able to design support for new z/OS capabilities in the existing SAS code base.

We suggest taking a look at the modern z/OS performance analysis and capacity management solutions available on the market today to ensure that your lifetime legacy of a well-performing, continually available and optimized z/OS environment is handed over to the next generation successfully.

This blog was originally published in 2017 and was updated in 2019.

Brent Phillips
