Our story begins as our stories usually do: somewhere in the middle, after the customer has been working on a problem for a while (in this case, low throughput to virtual tape) and is just about to give up.

The customer had a group of tape jobs that frequently did not finish on time, that is, within the batch window. When they ran late, they ran beyond the batch window and competed with the online activity. On some days a job would run in less than an hour, while on other days the same job would run for 10 to 15 hours.

Low Throughput to VSM Tape Systems

The customer investigated the issue when the jobs ran long and found that the throughput to the VSM tape systems was low for the jobs in question. A joint investigation with Oracle was started under the assumption that the problem was caused by the VSM tape systems. My intel tells me that six people from the customer worked on the investigation for a whole month, and that Oracle was unable to find anything wrong from a hardware or software perspective.

The investigation was temporarily abandoned, and the issue was left open with the expectation that the customer would eventually get new hardware, possibly from a different vendor. Shortly afterward, the customer began a trial run of IntelliMagic Vision.

IntelliMagic personnel were onsite for this trial, helping install IntelliMagic Vision and set up data collection. The customer has a complex environment with 4 sysplexes (12 LPARs) and over 1 petabyte of primary online disk storage. During a live customer demonstration of the IntelliMagic Vision product, the customer explained the issue in detail, and we did a joint investigation during the meeting, comparing the application tape profile between various days.

Knowing the Unknowable

We showed the mounts that each job would generate, and the customer’s conclusion was that the application should never require so many tape mounts. We concluded that IntelliMagic Vision did not yet provide a conclusive answer, but that it did point toward issues in the application, not in the Oracle VSM.

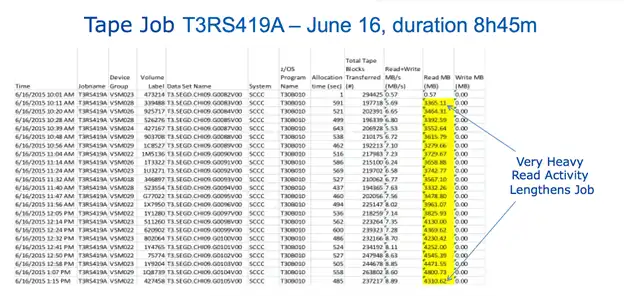

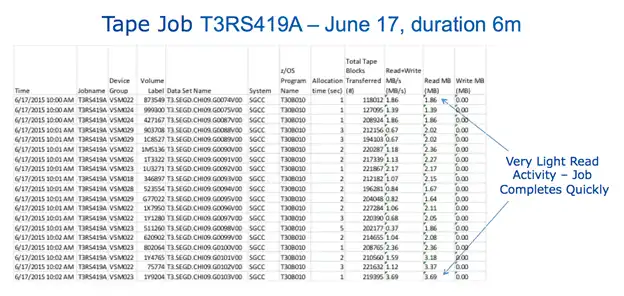

At night, after the meetings, we worked on product changes that would give conclusive answers to the issue at hand, such as improving the reporting of z/OS SMF tape data (adding some columns to a tabular report). The next morning, we introduced the product changes in the POC environment and reprocessed the data. We had a follow-on presentation with another group from the customer and showed them why the jobs were behaving differently on different days.

Using IntelliMagic Vision, it was extremely easy to see the behavior of the ill-performing jobs as far as tape activity was concerned. Based on the IntelliMagic Vision results, the customer knew exactly who had to fix the issue, and they turned to their application developers for design improvements.

Application Design Bug Led to Low Throughput

The problem was that on good days each job would write 10 virtual tapes, while on bad days each job would write 150. On top of that, the throughput to the VSM tape systems was low not because of a problem in the VSM tape systems, but because of excessive processing in the application.
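For readers who want to try this kind of day-to-day comparison on their own tape data, here is a minimal Python sketch. It is not how IntelliMagic Vision works internally. The record layout (`run_date`, `job_name`, `volser`), the job name `JOBA`, the day labels, and the 3x-median threshold are all assumptions made for illustration; real SMF tape records look different and would need to be parsed first. The 10 and 150 tape counts are the only values taken from the story above.

```python
from collections import defaultdict
from statistics import median


def volumes_per_run(records):
    """Count distinct virtual tape volumes written by each job on each day.

    `records` is an iterable of (run_date, job_name, volser) tuples,
    a hypothetical layout with one tuple per virtual tape written.
    """
    seen = defaultdict(set)
    for run_date, job_name, volser in records:
        seen[(job_name, run_date)].add(volser)
    return {key: len(volsers) for key, volsers in seen.items()}


def flag_outlier_runs(counts, factor=3.0):
    """Return (job, date, count) for runs that wrote more than
    `factor` times the job's median number of volumes."""
    by_job = defaultdict(list)
    for (job_name, _run_date), n in counts.items():
        by_job[job_name].append(n)
    flagged = []
    for (job_name, run_date), n in sorted(counts.items()):
        if n > factor * median(by_job[job_name]):
            flagged.append((job_name, run_date, n))
    return flagged


def make_run(run_date, job_name, n_tapes):
    """Build illustrative records for one job run (made-up volsers)."""
    return [(run_date, job_name, f"V{i:05d}") for i in range(n_tapes)]


# Two "good" days of 10 tapes and one "bad" day of 150 tapes.
records = (
    make_run("day1", "JOBA", 10)
    + make_run("day2", "JOBA", 10)
    + make_run("day3", "JOBA", 150)
)
print(flag_outlier_runs(volumes_per_run(records)))
```

Comparing each run against the job's own median, rather than against a fixed limit, is one simple way to make a bad day stand out even when different jobs normally write very different numbers of tapes.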

Conclusion: This problem started with the assumption that the issue was with the hardware, but it turned out to be a bug in the application design. Having accurate measurements to describe the behavior of the hardware and the contribution of each job during execution provides a complete picture of the environment and can help to eliminate time and expense spent trying to fix the wrong problem.

This article's author

Dave Heggen