Computers are great at doing what we tell them. They don’t get tired, bored, or complain about the endless work we have them doing. The tasks that have been given to computers range from the apps on your mobile device to the DevOps efforts that have helpfully provided hundreds of shortcuts in how we manage the work coming into important processing infrastructure.

This blog attempts to:

- Highlight the existence of automation in not-so-obvious places

- Provide you with some practical guidance on managing those workloads

- Help you identify the owner

What is the Underlying Noise?

On the day of the OJ Simpson verdict, Oct. 3, 1995, transaction rates plummeted at the Global Distribution System company I was working with at the time (Apollo), and I assume at many other sites around the world. The reason? Most humans with access to TV (150 million) and radio were waiting with great anticipation for the verdict. The dip was very brief, maybe 5 minutes, but the transactions that did occur during that time were likely made either through automation or by people oblivious to the trial.

I know this because the transaction rates at Apollo were carefully monitored and evaluated as we (the performance analysts) managed the performance of the online systems and subsystems. Travel agents were the primary users of Apollo’s system, so they were a bit ahead of the curve on automating repetitive sequences, but automation was making its way into businesses everywhere as Windows made doing so easier.

Turns out the OJ trial sparked my interest in the topic of automation.

Is Your Infrastructure on the “Stairway to Heaven”?

Other than being a classic Rock ‘n Roll song, some of those words may also represent the endless growth driving your infrastructure, and it’s hardly a heavenly outcome. It could be CICS, or DDF transaction rates, or something else. Even though CICS and DB2 are heavily instrumented, monitored, and analyzed, we still often see a disconnect between business growth and resource demand.

I have referenced this issue in my previous blog, Managing the Gap in IT Spending and Revenue Growth in your Capacity Planning Efforts, but this particular use case was not outlined. Let’s be clear. CICS and DB2 are often NOT the source of the problem, but rather victims of their own success.

The need for speed in our culture has also been a significant contributor to infrastructure demand.

For example, 30 years ago most of us were getting weather updates once a day in the newspaper or on the TV. Today, I can easily set a weather app on my phone to give me updates several times a day, and more often still, with near-real-time historical and forecast radar, when a Texas-sized thunderstorm is passing through.

Information is power; fast information can be ‘instant’ power, and in some cases, can save lives. We shouldn’t dismiss the business value in speed, but needless repetition comes with a cost.

A good capacity planner should work to answer many questions, and these two should be among them:

- What is the overall requirement for ‘robotic’ activity on our systems?

- Is there a way to tune it out?

Let’s answer the second question first and then spend time evaluating some ways to help you be the hero as you unmask the horde of robots and tune them out.

Using the WLM Policy to Reduce Impact of Lower Importance Work

One of the main competitive advantages for z/OS is the integration of workload priority into the operating system. X systems play ‘nice’, and virtualization lets you share some resources disproportionately, but nothing touches the breadth and depth of the z/OS Workload Manager (WLM).

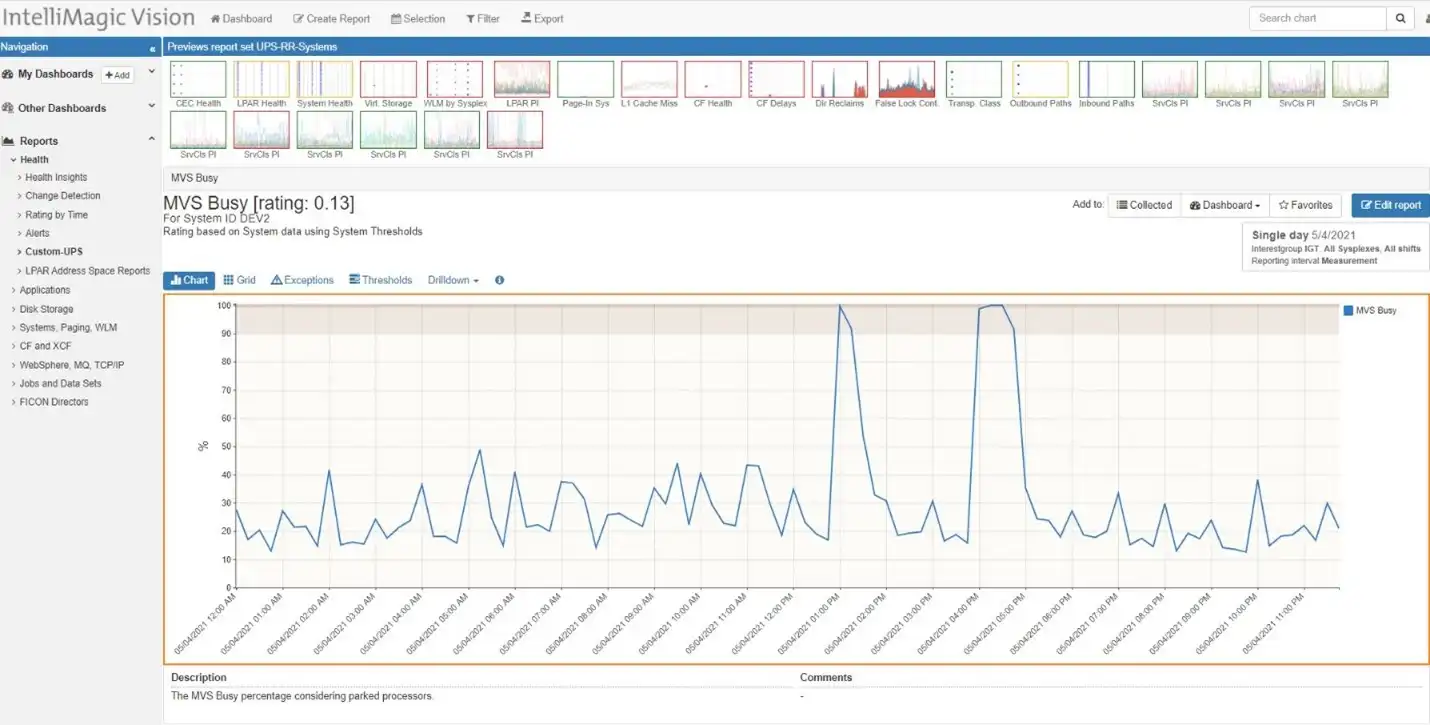

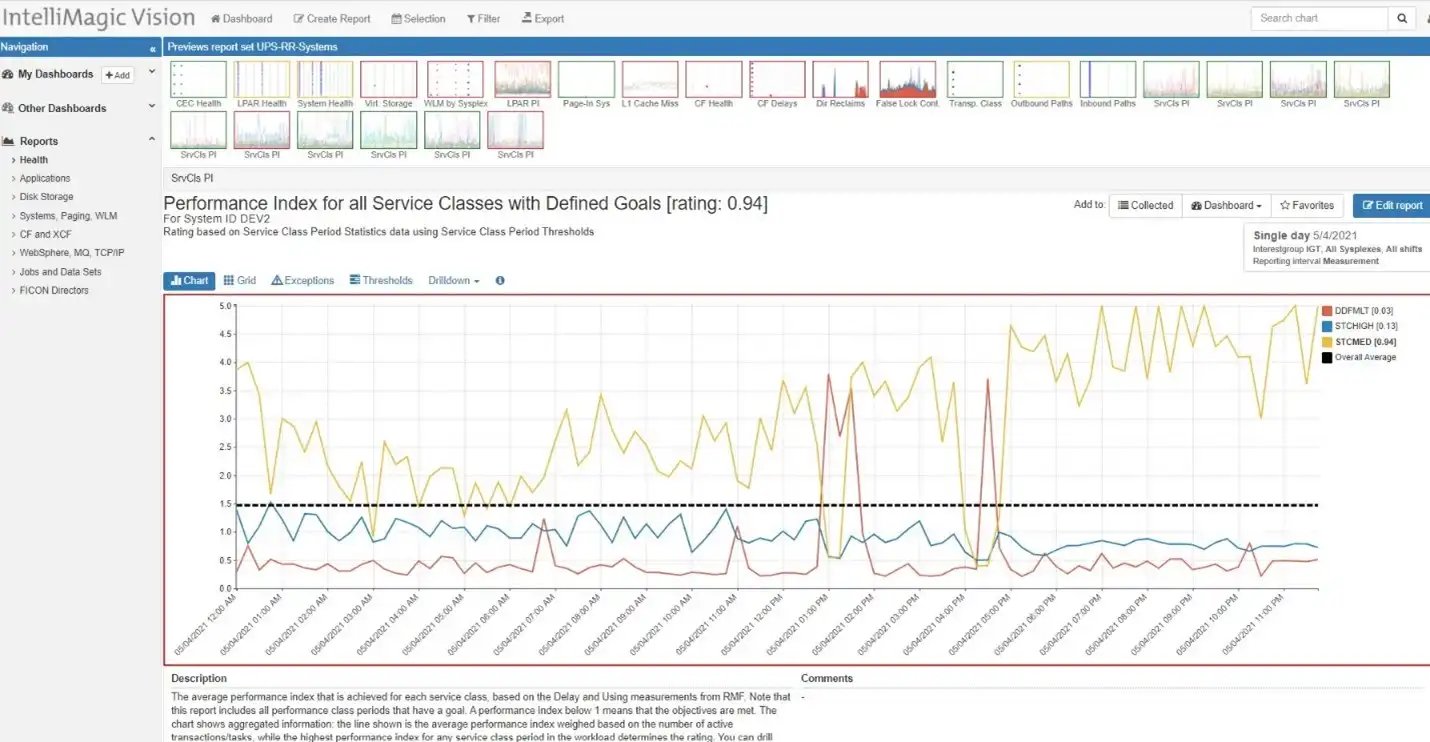

The views above from IntelliMagic Vision show that when the system is stressed (Figure 1), some workloads get better service, while another workload gets less service (Figure 2).

The performance index (PI) is one of the measures that shows how WLM manages dispatch priority in the z/OS operating system. A value of 1 tells us that a workload is getting service in line with its defined goal. A value higher than 1 tells us it is getting less, and the dispatcher should favor it over work with lower priority. A PI value lower than 1 suggests that the workload is getting more than has been established for it compared to others, which makes it a donor candidate when the system is resource constrained.

In Figure 2 above, the yellow and blue service classes get better service (PI goes down) during high demand (Figure 1), while the red gets less (goes up) since the priority of the red service class is lower.
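The PI reading described above can be sketched in a few lines of Python. The ratios follow the commonly documented WLM definitions for average response time and execution velocity goals (percentile goals are calculated differently and are left out here). The function names, the tolerance band, and the sample numbers are illustrative assumptions, not anything taken from IntelliMagic Vision or from a real WLM policy.

```python
def performance_index(goal_type: str, goal: float, actual: float) -> float:
    """Return a PI where 1.0 means the goal is being met exactly.

    response_time: actual response time / goal response time
    velocity:      goal velocity / achieved velocity
    """
    if goal <= 0 or actual <= 0:
        raise ValueError("goal and actual must be positive")
    if goal_type == "response_time":
        return actual / goal
    if goal_type == "velocity":
        return goal / actual
    raise ValueError(f"unsupported goal type: {goal_type}")


def interpret(pi: float, tolerance: float = 0.05) -> str:
    """Label a PI value; the tolerance band is an arbitrary assumption."""
    if pi > 1 + tolerance:
        return "missing goal"      # should be favored when resources are short
    if pi < 1 - tolerance:
        return "exceeding goal"    # donor candidate under constraint
    return "meeting goal"


# Hypothetical service class: 0.5 s response time goal, 1.0 s achieved.
pi = performance_index("response_time", goal=0.5, actual=1.0)
print(pi, interpret(pi))
```

A service class with a velocity goal of 40 that achieves a velocity of 80 would come out at a PI of 0.5, which is the donor case described above.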

As a z/OS performance analyst, you are probably aware of this. People are creative, and the computer doesn’t have to wait for a human to think about what to type and then type something in to get an answer. So some work gets automated.

Specific transactions might be repeated on a specific interval driven by the source to obtain metadata on the transaction type, or to look for a change in the output, so that next actions can take place or decisions can be made. Human creativity in assigning tasks to robots is why we have so many useful examples in our homes today. Assigning a legitimate robot a lower priority can be an excellent way to reduce impacts during peak demand.

But what if nobody cares about that automated 5-minute report anymore? What if the response time bots are no longer required because we have 16 other tools doing that work? Maybe it’s time to understand the usage a bit more. How many are actually out there?

Hunting Robots

Before we get carried away like Will Smith’s character in the movie I, Robot, we should work with the business, since these robots haven’t destroyed their maker. The hunt can start by narrowing down your search. The example below provides some answers to questions we might want to ask:

- Why is the transaction rate constant all day?

- Is it just one source?

- What is the source?

With a few dynamic reporting edits to a CICS transaction report, the details are easily exposed, and you can work with the robot owners to explain your inquiry.

This view also provides the basis for answering an important question: the cost. Why not have that answer ready when you make the call? A constant 120 seconds of CPU at 15-minute intervals is about 13% of one CP. This is a relatively small-scale example, but it is very likely there’s more to be found, and there are more robots being set up every day.

This could be one of the primary reasons you have unexplained growth in transaction rates and CPU demand. If your licensing model is Tailored Fit Pricing (TFP), then all consumption matters. If you’re still on 4HRA, it is likely this consumption is hitting the peak 4HRA, since it runs at a constant rate all day. If you have 100 CPs, this is a very small impact, but you can do the math on your software agreement to quickly come up with one primary driver of the IT cost, and you’ll be prepared to share that with the robot owner when you call. In doing the math, you may observe that this is a pretty hefty process for an online transaction (8 CPU sec/tran). Is this staccato process testing more functionality than needed?
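The arithmetic behind these figures is simple enough to keep in a small script. The sketch below uses only the numbers quoted above (120 CPU seconds per 15-minute interval, 8 CPU seconds per transaction, 100 CPs); the variable names are illustrative, and software cost is left out because it depends on your own agreement.

```python
INTERVAL_SECONDS = 15 * 60          # reporting interval from the example
CPU_SECONDS_PER_INTERVAL = 120.0    # constant CPU consumed by the robot
CPU_SECONDS_PER_TRAN = 8.0          # CPU cost per transaction
TOTAL_CPS = 100                     # size of the example configuration

# Fraction of a single CP kept busy by this work.
cp_busy = CPU_SECONDS_PER_INTERVAL / INTERVAL_SECONDS

# The same consumption as a share of the whole configuration.
share_of_capacity = cp_busy / TOTAL_CPS

# How often the robot must be firing to consume that much CPU.
trans_per_interval = CPU_SECONDS_PER_INTERVAL / CPU_SECONDS_PER_TRAN
seconds_between_trans = INTERVAL_SECONDS / trans_per_interval

print(f"{cp_busy:.1%} of one CP")
print(f"{share_of_capacity:.2%} of {TOTAL_CPS} CPs")
print(f"{trans_per_interval:.0f} transactions per interval, "
      f"one every {seconds_between_trans:.0f} seconds")
```

The result is roughly 13% of one CP, driven by 15 transactions per interval, or one transaction every minute.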

Eliminate, Reduce, or Prioritize?

First, ask the owner what is using the data every minute, then ask what the value of every minute is compared to every 30 minutes. One minute might be ‘convenient’, but if it is only needed once a day, why not run it once a day instead of once a minute and save some cash for IT work that will bring in higher-margin business?
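To put numbers behind that conversation, a rough comparison of daily CPU consumption at different polling intervals can help. This sketch assumes the 8 CPU sec/tran figure from the example above and one transaction per run; the function name and the chosen intervals are illustrative only.

```python
CPU_SECONDS_PER_TRAN = 8.0          # from the example; yours will differ
SECONDS_PER_DAY = 24 * 60 * 60


def daily_cpu_seconds(interval_minutes: float) -> float:
    """CPU seconds per day for one transaction every interval_minutes."""
    runs_per_day = SECONDS_PER_DAY / (interval_minutes * 60)
    return runs_per_day * CPU_SECONDS_PER_TRAN


for label, minutes in [("every minute", 1),
                       ("every 30 minutes", 30),
                       ("once a day", 24 * 60)]:
    print(f"{label:>16}: {daily_cpu_seconds(minutes):>8.0f} CPU seconds/day")
```

Under these assumptions, moving from every minute to every 30 minutes drops the daily total from 11,520 to 384 CPU seconds, and once a day costs just 8.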

If it must be there, the next question should be: what is the priority compared to other online work? A CICS transaction will generally have much higher priority than batch work. As mentioned earlier, WLM provides various ways to prioritize workload in the system, and there may be a convenient method to define this as discretionary CICS work. This may not save as much resource, but at least you are helping steer the workload out of the way of higher priority work when resources are thin.

Other Considerations

These principles and methods can also be applied to DDF work and other workloads. When the pattern that emerges consistently is similar to a looping process, it may just be an innocent robot doing its job and there may be no business loss to slowing it down.
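One way to spot a looping pattern is to look for sources whose per-interval transaction counts barely vary across the day. The sketch below is a minimal illustration that flags sources with a low coefficient of variation. The source names, the sample counts, and the 5% threshold are hypothetical, and it works on plain Python lists rather than on any real SMF or IntelliMagic Vision data format.

```python
from statistics import mean, pstdev


def flag_steady_sources(counts_by_source: dict[str, list[int]],
                        max_cv: float = 0.05) -> list[str]:
    """Return sources whose interval counts are nearly constant."""
    flagged = []
    for source, counts in counts_by_source.items():
        # Need some history, and activity in every interval.
        if len(counts) < 2 or min(counts) == 0:
            continue
        # Coefficient of variation: spread relative to the average rate.
        cv = pstdev(counts) / mean(counts)
        if cv <= max_cv:
            flagged.append(source)
    return sorted(flagged)


# Hypothetical transactions per 15-minute interval, by originating source.
sample = {
    "MONBOT1": [15, 15, 15, 15, 15, 15, 15, 15],    # flat all day
    "BRANCH01": [2, 5, 40, 85, 90, 60, 20, 3],      # follows business hours
    "NIGHTJOB": [0, 0, 120, 0, 0, 0, 0, 0],         # single burst
}
print(flag_steady_sources(sample))
```

A flagged source is only a candidate. Human-driven work that happens to be steady will also show up, so the result is a starting point for the conversation with the owner rather than a verdict.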

Vastly improved networks, access to vast cloud resources, and easy-to-use scripting have created some real problems with ‘autobots’. I’ve observed this situation when ‘new prospective’ business partners used multiple cloud-based sources to ‘check’ the scalability of production applications. The business is going to pursue new revenue sources aggressively, and we should be prepared to gate this type of activity with WLM, thereby preventing these activities in production.

Want Advice?

As you can see from the examples above, there are real opportunities. IntelliMagic Vision is a great gateway to optimizing your systems. If you’re interested in learning more, feel free to start a free trial or schedule a demo with one of our consultants. We would be happy to assist you with this valuable work.

This article's author

Jack Opgenorth
