A few years ago, I was at a prospect’s site that had (Dell) EMC Vplex and I saw on their cubicle wall a hand-written note with the number for Vplex support help: 1-800-SVC-4EMC. At the time EMC was apparently trying to compete with IBM’s SVC, now Spectrum Virtualize. Based on interactions I’ve had with customers over the years, the install base seems to be shrinking but is still significant.

I have a lot of previous experience with IBM’s Spectrum Virtualize solution. While it is similar to the EMC Vplex architecture, there are some key differences, and I will explain some of them in this blog. In a follow-up blog, we will look at a use case where we use the performance and configuration data to help troubleshoot a performance problem on an EMC Vplex.

Architectural Differences Between EMC Vplex and IBM Spectrum Virtualize

While both the IBM Spectrum Virtualize and EMC Vplex solutions virtualize back-end storage, EMC focused on synchronous, non-disruptive replication of primary site devices. IBM, on the other hand, focused on centralized management of a heterogeneous storage environment that often consisted of low-end, simple back-end storage hardware. The (Dell) EMC strategy seemed to focus on maintaining sales of their high-end, feature-rich VMAX boxes, so the EMC Vplex solution focused on the disaster recovery layer.

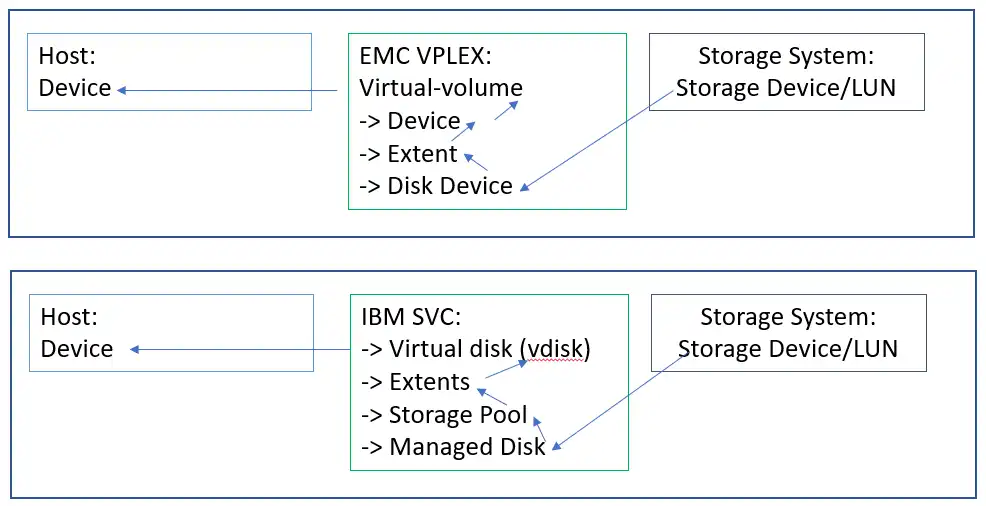

In both the IBM SVC and EMC Vplex, the storage volumes are derived by connecting to and using external back-end storage devices/volumes. These storage volumes are added to managed devices on the IBM SVC and EMC Vplex. This is where the differences between the platforms arise.

On IBM, these storage devices are managed as a pool of devices. The pool then breaks up the storage into extents that range in size depending on the size of the pool but are typically smaller than the back-end managed disks. The extents in the pool are grouped together to provide LUNs to hosts consisting of extents from multiple back-end storage devices.
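To make the pooling idea concrete, here is a minimal sketch of how a volume could be assembled from extents spread across several back-end managed disks. It is an illustration only, not IBM's implementation: the names `build_pool` and `allocate_striped`, the GiB units, and the simple round-robin order are all assumptions made for the example.

```python
from itertools import cycle


def build_pool(mdisks_gib, extent_gib):
    """Split each managed disk into fixed-size extents.

    Returns {mdisk name: [free extent indexes]}. Names and units are hypothetical.
    """
    return {name: list(range(size // extent_gib)) for name, size in mdisks_gib.items()}


def allocate_striped(pool, volume_gib, extent_gib):
    """Take free extents round-robin across managed disks until the volume is covered."""
    needed = -(-volume_gib // extent_gib)  # ceiling division
    free = sum(len(extents) for extents in pool.values())
    if needed > free:
        raise ValueError("pool has too few free extents")

    layout = []
    for name in cycle(sorted(pool)):
        if len(layout) == needed:
            break
        if pool[name]:  # skip managed disks that are already exhausted
            layout.append((name, pool[name].pop(0)))
    return layout
```

With three 4 GiB managed disks and 1 GiB extents, a 5 GiB volume ends up with extents on all three disks, which is the property the pool is meant to provide.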

On EMC Vplex, the back-end storage LUNs are seen by the Vplex, passed through a couple of virtualization layers, and eventually presented as volumes to the hosts. One of these layers is the extent layer but I have never seen Vplex use the extents from multiple back-end devices as in the case of an IBM SVC pool.

In a Vplex there is typically a 1:1 relationship between the extents, the back-end volumes and the front-end virtual volumes that are presented to hosts as shown in Figure 1: Vplex vs. SVC Architecture.

Figure 1: Vplex vs. SVC Architecture

While the hosts do not connect to the back-end devices directly, there is a 1:1 relationship between the virtualized devices presented to the hosts and the back-end devices. In this sense, there is no pool of extents as there is in an IBM SVC environment. Any pooling of extents takes place on the back-end storage system.
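The 1:1 layout can be sketched in the same style. This is a simplified model of the typical case described above, not a representation of the Vplex software: the `VirtualVolume` class, the `present_one_to_one` function, and the generated object names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualVolume:
    """One front-end volume and the single chain of objects beneath it."""
    name: str
    extent: str
    storage_volume: str  # the back-end LUN as seen by the Vplex


def present_one_to_one(storage_volumes):
    """Map every back-end LUN to exactly one extent and one virtual volume."""
    return [
        VirtualVolume(name=f"vv_{lun}", extent=f"extent_{lun}", storage_volume=lun)
        for lun in storage_volumes
    ]


def backends_per_volume(volumes):
    """Count how many distinct back-end LUNs sit behind each virtual volume."""
    return {v.name: len({v.storage_volume}) for v in volumes}
```

In this model every virtual volume reports exactly one back-end LUN, so a hot virtual volume points straight at one back-end device rather than at a striped pool.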

EMC Vplex Measurement

EMC Vplex provides a couple of ways to gather information: a REST API and a CLI. Both allow you to interact with the performance logging and perform tasks such as pulling the log files and the XML configuration file. The implementation details of the data collection are not particularly user-friendly, so ideally you will use a simple collector that hides those details, allowing you to focus on the analysis.

Key Components/Metrics

The EMC Vplex consists of a cluster of four Directors. Each Director contains CPU, Cache, Front-end Ports and Back-end Ports. The metrics EMC Vplex provides include, but are not limited to, the following:

Front-end Port Metrics: Read IOPS, Write IOPS, Total IOPS, Read Throughput, Write Throughput, Total Throughput, Read Response Time, Write Response Time, Total Response Time.

Director: CPU utilization is provided at the director level.

Calculated and Aggregated Port Metrics: All the Front-end Port metrics above can be rolled up to the Cluster and Director levels. Port utilization can be calculated / estimated based on the activity and the rating of the port.
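As a rough sketch of the roll-up and the utilization estimate, the snippet below sums port activity to the director or cluster level and divides observed throughput by the port's rated bandwidth. The field names (`cluster`, `director`, `iops`, `mbps`, `resp_ms`) and the function names are assumptions for illustration, not the names used in Vplex output. Response time is rolled up as an IOPS-weighted average, since a plain average would let an idle port skew the result.

```python
from collections import defaultdict


def roll_up(ports, level):
    """Aggregate per-port samples to `level` ('director' or 'cluster')."""
    totals = defaultdict(lambda: {"iops": 0.0, "mbps": 0.0, "weighted_ms": 0.0})
    for port in ports:
        bucket = totals[port[level]]
        bucket["iops"] += port["iops"]
        bucket["mbps"] += port["mbps"]
        bucket["weighted_ms"] += port["iops"] * port["resp_ms"]

    return {
        key: {
            "iops": t["iops"],
            "mbps": t["mbps"],
            # IOPS-weighted response time; 0.0 when the group was idle
            "resp_ms": t["weighted_ms"] / t["iops"] if t["iops"] else 0.0,
        }
        for key, t in totals.items()
    }


def port_utilization(observed_mbps, rated_mbps):
    """Estimate utilization as observed throughput over the port's rated throughput."""
    if rated_mbps <= 0:
        raise ValueError("rated_mbps must be positive")
    return observed_mbps / rated_mbps
```

The rated throughput has to come from the port's link speed, so the estimate is only as good as that rating.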

Volume Metrics: Total I/O Rate, Read Throughput, Write Throughput, Overall Throughput, Read Response Time, Write Response Time.

Calculated and Aggregated Volume Metrics: You can use the EMC Masking views to accumulate device-level metrics to the masking views that contain those devices. This provides a view into the performance seen by the hosts associated with the EMC Vplex.
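The masking view roll-up amounts to a join between the view-to-volume mapping from the configuration data and the per-volume metrics from the performance data. A minimal sketch, assuming both have already been parsed into dictionaries (the key names `io_rate`, `read_mbps`, and `write_mbps` and the function name are hypothetical):

```python
def aggregate_by_view(views, volume_metrics):
    """Sum volume metrics into each masking view that contains the volume.

    views:          {view name: [volume names]}
    volume_metrics: {volume name: {"io_rate": ..., "read_mbps": ..., "write_mbps": ...}}
    """
    result = {}
    for view, volumes in views.items():
        total = {"io_rate": 0.0, "read_mbps": 0.0, "write_mbps": 0.0}
        for volume in volumes:
            metrics = volume_metrics.get(volume)
            if metrics is None:
                continue  # no samples for this volume in the interval
            for key in total:
                total[key] += metrics[key]
        result[view] = total
    return result
```

Only additive metrics are summed here. Response times would need to be weighted by the matching I/O rate rather than added or averaged directly.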

Summary of Part 1

So far in this blog we have looked at the architecture of EMC Vplex and how it differs from the IBM Spectrum Virtualize solution. The primary difference is that the Vplex doesn’t use the back-end devices to create a high-performance pool from which to carve out LUNs. Instead, it assumes the back-end devices already have the appropriate performance characteristics. We also discussed the key performance metrics for an EMC Vplex. In the next blog we will take a look at an actual performance issue on an EMC Vplex.

This article's author

Brett Allison
