[This blog introduces an article I wrote for Cheryl Watson’s Tuning Letter 2020 No. 2. Follow this link to read the entire article on “Learning From SMF – MQ Accounting,” republished with permission from Watson & Walker.]

Learning from SMF Data

The Tuning Letter article is one in a series designed to introduce the insights that SMF data provides into various areas of the z/OS infrastructure. As indicated in my earlier ‘Learning From SMF – MQ Statistics’ article, easy visibility into SMF data makes it possible to learn how various z/OS components actually operate in your environment. While each of us is an expert in some areas, we are likely novices in other technologies, and there the insights gained from SMF data can accelerate our learning.

This article continues the MQ theme by investigating the data available in MQ Accounting (SMF type 116) records, which provide detailed metrics at many levels. MQ performance specialists rely heavily on this valuable data to investigate application problems and carry out performance tuning. This very interesting (and voluminous) data is generated by issuing the MQ “START TRACE(ACCTG) DEST(SMF) CLASS(3)” command, which causes MQ to produce SMF type 116 subtype 1 and 2 records.

Challenges Accessing MQ Accounting Data

Even though MQ is widely used across today’s z/OS environments, and its SMF data offers rich potential insights for managing and operating MQ, there can be challenges to gaining visibility into, and deriving value from, this data.

Challenge 1: Lagging Reporting Capabilities

One challenge is that reporting capabilities for MQ SMF data have often lagged those of other aspects of the z/OS infrastructure. This is due to the limitations of available tooling, the need to learn unfamiliar tooling that is siloed by area, and constraints in the time and expertise required to develop in-house programs.

These are general obstacles that can apply to all types of SMF data. But based on input from customers and conversations with attendees at conference sessions, these obstacles appear to be particularly acute for MQ, which has historically received less focus than other areas for in-house-developed reporting.

Challenge 2: Generating MQ Records is Considered Expensive

Another challenge specific to MQ Accounting metrics is that generating these records is often considered to be too expensive, both in CPU overhead and SMF record volume. As a result, many sites only enable MQ Accounting data on an exception basis. However, we believe that these concerns may be overstated.

Estimates in earlier IBM presentations indicated that enabling MQ Accounting data resulted in a 5-10% overhead. But in a recent blog, IBM’s Lyn Elkins estimated the CPU cost of generating this data to be somewhat lower, in the 3-7% range. Also, keep in mind that MQ is typically not a high CPU consumer in the first place.

Our own analysis of comparative SMF data volumes at one moderately large site indicates that MQ Accounting data may be more manageable than is often believed. The volume of MQ Accounting records at this site was comparable to that of their CICS Transaction data (SMF 110 records), and only 15-20% of the size of their Db2 Accounting data (SMF 101 records). Both of these record types are generated continuously at many sites.
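If you want to run a similar comparison at your own site, one approach is to tally bytes by SMF record type across a representative dump. The Python sketch below assumes the dumped records were transferred in binary with their 4-byte record descriptor words (RDWs) intact and that no record is split into spanned segments; under those assumptions, the record type is the byte at offset 5 of each record, counting from the start of the RDW. The function name `smf_bytes_by_type` is hypothetical, and the whole dump is read into memory for simplicity.

```python
import struct
from collections import Counter


def smf_bytes_by_type(data: bytes) -> Counter:
    """Sum record lengths (in bytes) per SMF record type.

    Assumes each record starts with a 4-byte RDW (2-byte big-endian length
    that includes the RDW itself, then a 2-byte segment descriptor) and that
    spanned records have already been reassembled.
    """
    totals: Counter = Counter()
    offset = 0
    while offset + 4 <= len(data):
        (length,) = struct.unpack_from(">H", data, offset)
        if length < 6 or offset + length > len(data):
            raise ValueError(f"malformed or truncated record at offset {offset}")
        # Standard SMF header: flag byte at RDW+4, record type byte at RDW+5
        record_type = data[offset + 5]
        totals[record_type] += length
        offset += length
    return totals
```

Comparing `totals[116]` with `totals[110]` and `totals[101]` then gives relative volume figures of the kind described above.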

Challenge 3: Lack of Visibility into MQ Accounting Data

A lack of good visibility into MQ Accounting data may also contribute to a reluctance to generate it, the sentiment being “even if we collected this data, what would we do with it?” This article intends to help answer that question.

The bottom line is that it may be more feasible and beneficial to generate MQ Accounting data than you previously thought. A starting point could be to generate this data on a periodic basis, both to establish a workload baseline and to measure your own SMF data volumes and CPU overhead, and then proceed from there.
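Once a baseline exists, estimating the CPU overhead reduces to simple arithmetic. This sketch compares CPU per MQ call across two comparable intervals, one without and one with class 3 accounting active; normalizing per call reduces, but does not remove, the effect of workload differences between the intervals. How you obtain the CPU seconds and call counts for each interval is left as an assumption, and `accounting_overhead_pct` is a hypothetical helper, not part of any product.

```python
def accounting_overhead_pct(cpu_off: float, calls_off: int,
                            cpu_on: float, calls_on: int) -> float:
    """Percent increase in CPU per MQ call after enabling accounting.

    Inputs (hypothetical): CPU seconds attributed to the MQ workload and the
    number of MQ calls, for an interval without accounting (off) and a
    comparable interval with accounting active (on).
    """
    if calls_off <= 0 or calls_on <= 0:
        raise ValueError("call counts must be positive")
    per_call_off = cpu_off / calls_off
    per_call_on = cpu_on / calls_on
    if per_call_off == 0:
        raise ValueError("baseline CPU per call must be non-zero")
    return (per_call_on - per_call_off) / per_call_off * 100.0
```

For example, a baseline interval using 100 CPU seconds for 1,000 calls and an accounting interval using 110 seconds for the same number of calls yields an estimated overhead of about 10%.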

Interpreting and Deriving Value from MQ Accounting Data

As was the case with the preceding article on MQ Statistics, the sample reports and views of MQ Accounting data in this article have been created using IntelliMagic Vision, which provides an intuitive interface to easily explore this data, and the ability to dynamically drill down to view relationships between various metrics.

Some of the key metrics provided in the MQ Accounting data are:

- elapsed time by MQ command
- elapsed time by component of MQ activity
- CPU time
- command rates

These metrics can be analyzed from several perspectives, including by queue name, connection type, connection (address space) name, buffer pool, and queue manager, as will be shown in the examples that follow.
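Conceptually, each of these perspectives is a group-by over the accounting records. The sketch below uses a deliberately simplified, hypothetical record layout (`AcctRecord`, whose field names are illustrative and are not the actual SMF 116 field names) to total CPU by any one dimension; decoding real SMF 116 records into this shape is outside its scope.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Hashable, Iterable


@dataclass
class AcctRecord:
    """Hypothetical, simplified accounting record (illustrative field names)."""
    queue_manager: str
    connection_type: str
    connection_name: str
    queue_name: str
    buffer_pool: int
    cpu_seconds: float


def cpu_by(records: Iterable[AcctRecord], dimension: str) -> Dict[Hashable, float]:
    """Total CPU seconds grouped by one attribute, largest total first."""
    totals: Dict[Hashable, float] = defaultdict(float)
    for rec in records:
        totals[getattr(rec, dimension)] += rec.cpu_seconds
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))
```

Passing `"queue_name"`, `"buffer_pool"`, or `"queue_manager"` instead of `"connection_type"` switches the perspective without changing the logic.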

CPU Time Reporting

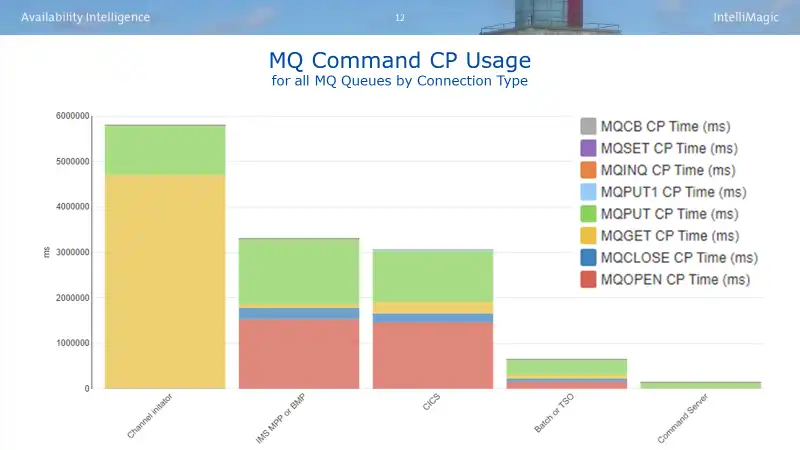

One of the primary areas of interest in the MQ Accounting data is likely to be CPU usage. In the example in Figure 1, the three primary drivers of MQ CPU are channel initiators and work arriving from IMS and CICS.

CPU and Elapsed Time Reporting by MQ Call

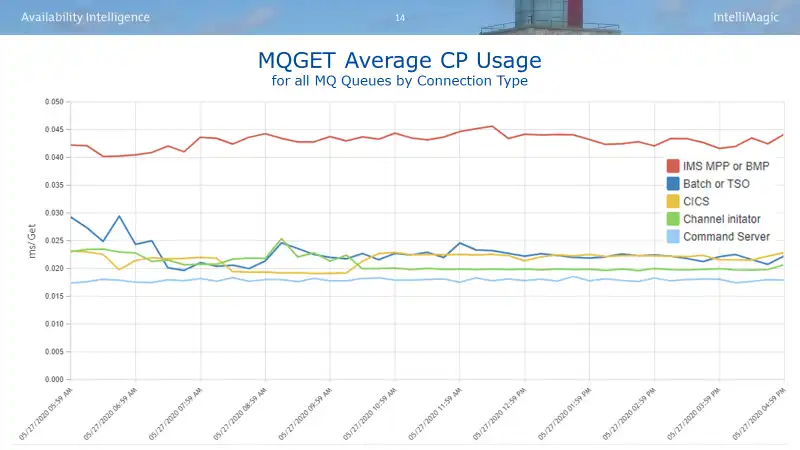

An analyst may also want to view metrics such as CPU and elapsed times on a per-call basis. As before, this analysis can be further focused by queue name, queue manager name, buffer pool, or connection type (the last of which is illustrated here).

Selecting MQGET commands to illustrate this analysis, we might begin by viewing CPU time per MQGET call by connection type. As is also the case with Db2 accounting data, the type of work issuing MQ calls can lead to distinct profiles, as seen in Figure 2. Though the absolute numbers are small, analysts in this environment would observe that the CPU per MQGET for requests coming from IMS is approximately double that of the other connection types.
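The per-call comparison in Figure 2 amounts to dividing CPU time by call count within each connection type. This sketch assumes hypothetical, pre-summarized rows of `(connection_type, call_name, call_count, cpu_seconds)`; it computes average CPU per MQGET and then how that value for one connection type compares with the mean of the others. Both function names are illustrative.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical row layout: (connection_type, call_name, call_count, cpu_seconds)
Row = Tuple[str, str, int, float]


def cpu_per_call(rows: Iterable[Row], call: str = "MQGET") -> Dict[str, float]:
    """Average CPU seconds per call of the given type, by connection type."""
    cpu: Dict[str, float] = defaultdict(float)
    count: Dict[str, int] = defaultdict(int)
    for conn_type, call_name, n, secs in rows:
        if call_name == call:
            cpu[conn_type] += secs
            count[conn_type] += n
    # Skip connection types that issued no calls of this type
    return {ct: cpu[ct] / count[ct] for ct in cpu if count[ct] > 0}


def ratio_to_others(per_call: Dict[str, float], target: str) -> float:
    """Ratio of target's per-call CPU to the mean of the other connection types."""
    others = [v for k, v in per_call.items() if k != target]
    if not others:
        raise ValueError("need at least one other connection type")
    return per_call[target] / (sum(others) / len(others))
```

A ratio near 2.0 for IMS would correspond to the roughly doubled CPU per MQGET described for this environment.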

“Learning From SMF – MQ Accounting” Full Article

The two examples in this blog only scratch the surface of the insights that MQ Accounting data can provide into the operation and performance of your MQ infrastructure, a source of insight that remains largely untapped at many sites. I hope they have been sufficient to arouse your interest in reading the entire article as published in Cheryl Watson’s Tuning Letter.


This article's author


Todd Havekost
