Significant obstacles to implementing zHyperLink

Sure, the published response time expectations for zHyperLink are amazing: 20 microseconds or less. For those of us who have been in the mainframe industry for years, that is a remarkable number. Without a doubt, the far-reaching changes across the hardware and software stack required to pull this off represent a phenomenal technological accomplishment.

But achieving this blazing response time requires some very significant trade-offs, specifically for data center configurations and replication solutions. In the data center, zHyperLink requires direct point-to-point connectivity between processors and storage frames. That rules out the use of FICON directors, which have greatly simplified data center connectivity since the old “bus and tag cable” days. In addition, zHyperLink limits the maximum distance between processor and storage frame to 150 meters.

When it comes to replication, many of today’s strategies rely heavily on synchronous replication between storage frames to ensure continuous availability despite storage frame failures and data center environmental incidents. But synchronous replication is not supported with zHyperLink since the time required for the frame-to-frame communication protocol to replicate writes would exceed its service time “budget”.
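
The three obstacles described above can be summarized as a simple checklist. The following is a minimal sketch, not an IBM or IntelliMagic tool: the `StoragePath` class, its field names, and the `zhyperlink_obstacles` function are hypothetical names introduced here for illustration, and the only figure taken from this article is the 150 meter limit.

```python
from dataclasses import dataclass

# Maximum processor-to-storage-frame distance cited in this article.
MAX_DISTANCE_M = 150


@dataclass
class StoragePath:
    """Hypothetical description of one processor-to-storage-frame path."""
    distance_m: float          # cable distance in meters
    via_ficon_director: bool   # True if the path goes through a FICON director
    sync_replicated: bool      # True if writes are synchronously replicated


def zhyperlink_obstacles(path: StoragePath) -> list[str]:
    """Return the obstacles from this article that apply to the given path."""
    obstacles = []
    if path.via_ficon_director:
        obstacles.append("needs direct point-to-point connectivity (no FICON director)")
    if path.distance_m > MAX_DISTANCE_M:
        obstacles.append(f"distance exceeds {MAX_DISTANCE_M} meters")
    if path.sync_replicated:
        obstacles.append("synchronous replication is not supported")
    return obstacles


# Example: a path that would have to be reworked on all three counts.
candidate = StoragePath(distance_m=200, via_ficon_director=True, sync_replicated=True)
for obstacle in zhyperlink_obstacles(candidate):
    print(obstacle)
```

An empty list means none of the obstacles named in this article apply to that path; it does not by itself establish that a site is ready for zHyperLink.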

Impact of the obstacles hindering zHyperLink adoption: less than anticipated

With these significant data center configuration and replication strategy obstacles, many (including this writer) anticipated a very slow adoption rate. After all, how many sites are experiencing business workload constraints arising from I/O latency to the extent that it would warrant the “pain” associated with overcoming the obstacles described above? But to the extent that the attendees of our recent IntelliMagic zHyperLink webinar are representative of the industry, zHyperLink adoption may be more rapid than anticipated.

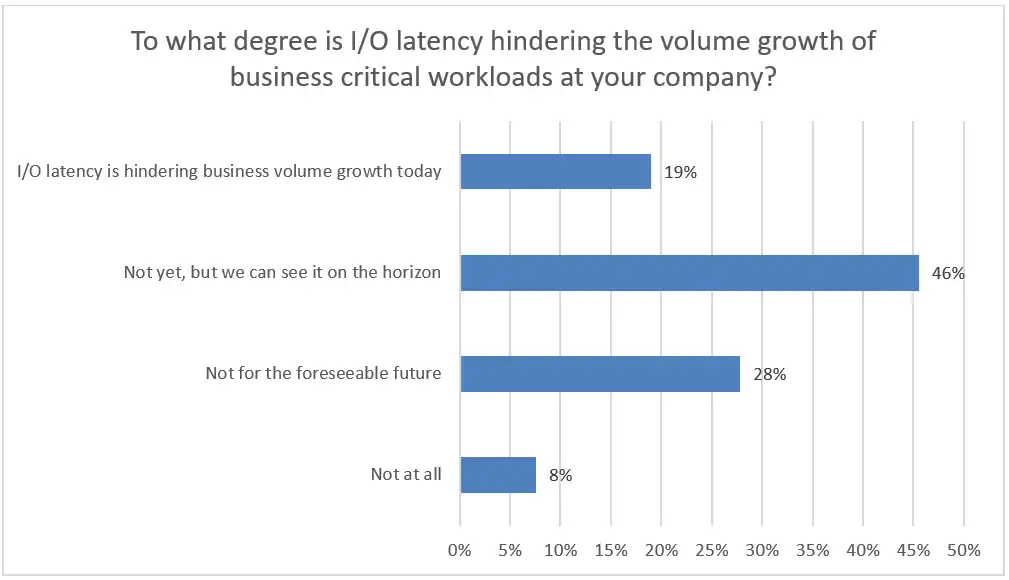

Question 1: Impact of I/O latency

We asked our webinar audience two questions, to which more than 70 responded. The first pertained to the degree to which I/O latency was hindering business volume growth. After all, if you don’t or won’t have the disease, there is no need to endure a painful procedure from your doctor.

As you can see from this chart, well over half the respondents in this webinar indicated that I/O latency is now or will soon be hindering volume growth of business-critical workloads in their environments. This differs from the common perception that I/O issues are a problem of the past. Despite all the improvements that have been made in I/O performance, including the recent proliferation of flash storage, more than half of these respondents remain concerned about I/O performance.

Since many of these sites are facing the type of I/O constraints that zHyperLink is designed to relieve, perhaps they would be willing to expend the effort required to overcome the previously described data center and replication hurdles.

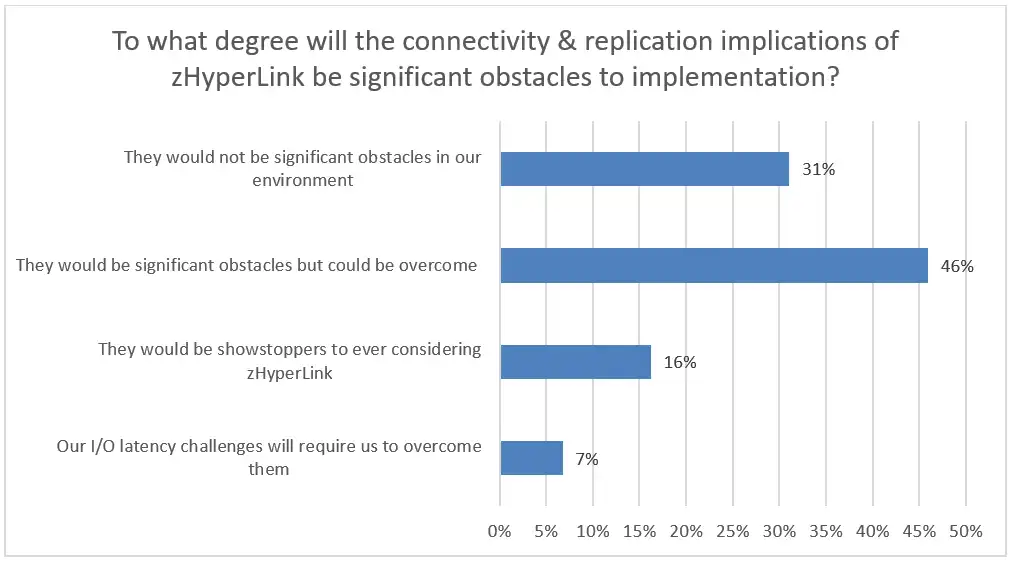

Question 2: Willingness to “push through the pain” to “achieve the gain”

That was exactly the scope of the second question. How significant did our audience believe those connectivity and replication obstacles would be to implementing zHyperLink at their sites?

The response to this question was even more favorable to zHyperLink adoption. More than 4 of 5 respondents indicated that the connectivity and replication obstacles could or would be overcome.

Looking ahead to zHyperLink adoption

Today only Db2 reads are eligible for zHyperLink, and they complete as successful zHyperLink “sync I/Os” only if they are cache hits. Software and hardware enhancements are expected to significantly expand the subset of I/Os that can benefit from zHyperLink in the near future, but today’s connectivity and replication limitations are likely to remain in place. The fact that this audience was motivated to overcome those restrictions (due to the need to relieve I/O latency constraints), and was optimistic that doing so was feasible, bodes well for near-term industry adoption of zHyperLink.

If you haven’t already, check out our webinar on the topic of zHyperLink, or read our white paper, zHyperLink: The Holy Grail of Mainframe IO?

This article's author


Todd Havekost
